Flawless Coordination

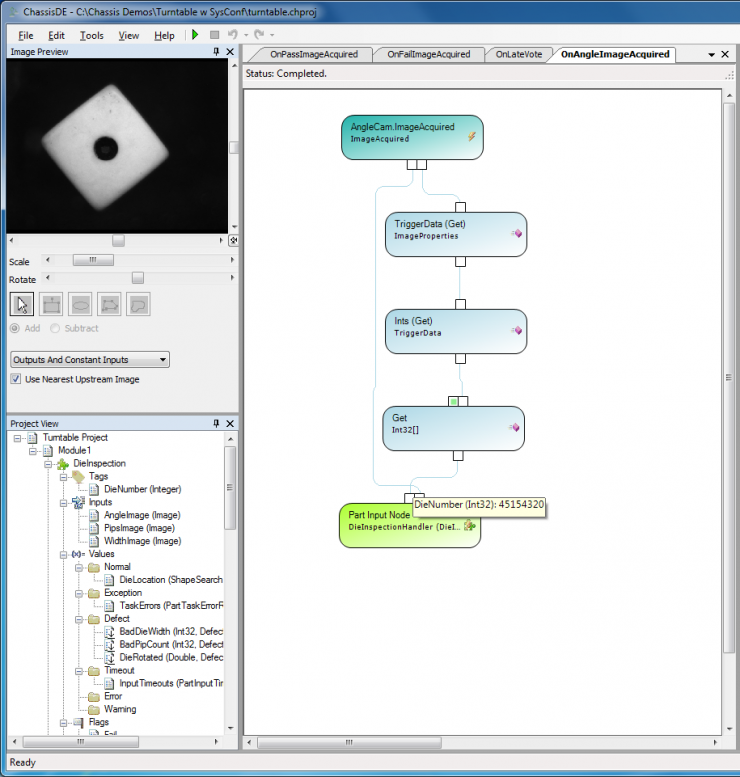

Opteon systems provide completely deterministic results by optionally delivering, as a component of the image data, user-specified application data that can be unique to each part being inspected.

A challenge for all machine vision systems is the need to coordinate the timeless environment of the computers that process image data with the real-world time frames of manufacturing machinery and its associated sensors and actuators. It is a divide that conventional machine vision systems have never adequately spanned. Consequently, their outputs remain inherently uncertain and their decisions less than reliable.

Lacking a deterministic method of linking image data to the particular part to which it applies, camera and machine vision manufacturers have capitulated and universally rely on the “time” or “order” of arrival of images to associate them with the “right” part being inspected. Unfortunately, neither time nor order has real or consistent meaning in modern computers. For all but the slowest inspections (fewer than one per second), these approaches fail with some frequency on any multi-tasking operating system, including, without exception, “real-time” OSs.

While this problem has persisted since multi-tasking operating systems became dominant in the ’80s, it has been greatly exacerbated by multi-core computers, which may reduce the processing time for images but actually increase the uncertainty about which image data belongs to which part.

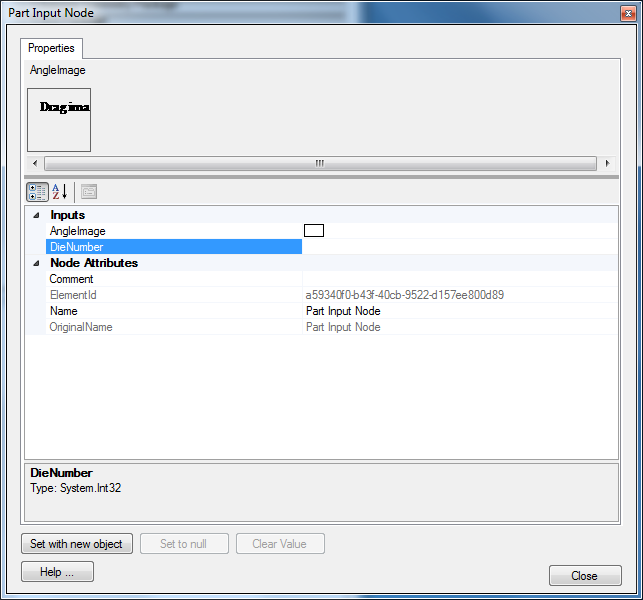

Metadata generated in the real-time environment and delivered with each image to the host computer provides an elegant solution to this problem with perfect determinism. Opteon systems can deliver, as a component of each image, application-specific data defined by the machine builder that is unique to each part to be inspected. This means that any code object operating on an image always knows exactly which part that image represents.
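The difference between the two approaches can be illustrated with a minimal Python sketch. It is not Opteon's API: the `Frame` type, its `part_id` field, and both helper functions are hypothetical names chosen for illustration, and the metadata is assumed to be a single integer part identifier. The sketch simulates one image arriving late and compares association by arrival order with association by embedded metadata.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Frame:
    """Hypothetical image record: pixel data plus metadata attached at capture."""
    pixels: bytes
    part_id: int  # assumed metadata field, unique to each inspected part


def associate_by_order(frames, expected_part_ids):
    """Conventional approach: the n-th image to arrive is assumed to be the n-th part."""
    return dict(zip(expected_part_ids, frames))


def associate_by_metadata(frames):
    """Metadata approach: each image states which part it belongs to."""
    return {frame.part_id: frame for frame in frames}


# Four parts are imaged in order 1..4.
captured = [Frame(f"img-{n}".encode(), n) for n in (1, 2, 3, 4)]

# On the host, the image of part 2 is delayed and arrives after part 3.
arrived = [captured[0], captured[2], captured[1], captured[3]]

by_order = associate_by_order(arrived, [1, 2, 3, 4])
by_metadata = associate_by_metadata(arrived)

# Parts whose assigned image actually shows a different part.
mismatched = [pid for pid, frame in by_order.items() if frame.part_id != pid]
print("mismatched by order:", mismatched)
print("part 2 via metadata:", by_metadata[2].pixels)
```

In this simulation the order-based lookup pairs parts 2 and 3 with each other's images, while the metadata-based lookup is unaffected by the arrival order.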

Metadata unique to each part can be generated by industry-standard Programmable Logic Controllers, where they are fast enough, or by Opteon system coordinators with a guaranteed 1 µs response time. Information from both types of sources, at multiple locations in your machine, can be combined for each image.
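As a rough sketch of what combining sources for one image could look like on the host side, the Python below merges fields from two sources into a single per-image record. The function name, the field names, and the choice to reject conflicting field names are all assumptions made for illustration; the actual metadata layout is defined by the machine builder.

```python
def build_image_metadata(plc_fields: dict, coordinator_fields: dict) -> dict:
    """Merge metadata from two hypothetical sources into one per-image record.

    Overlapping field names are rejected so that no value is silently
    overwritten (an assumed policy, not a documented one).
    """
    overlap = plc_fields.keys() & coordinator_fields.keys()
    if overlap:
        raise ValueError(f"conflicting metadata fields: {sorted(overlap)}")
    return {**plc_fields, **coordinator_fields}


# Hypothetical values: a PLC supplies part identity, a coordinator supplies timing data.
plc_fields = {"part_serial": "A-1042", "station": 3}
coordinator_fields = {"encoder_count": 88213, "trigger_index": 517}

metadata = build_image_metadata(plc_fields, coordinator_fields)
print(metadata)
```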